From the basic linear algebra, we know what is the matrices and how it represents the equations. I always wonder how I can relate the neural networks with the basic math. Here is the inference with an example.

Do you know that the neural networks which is one of the most successful and powerful machine learning models which is used for numerous applications, is largely based on the matrices and matrix products.

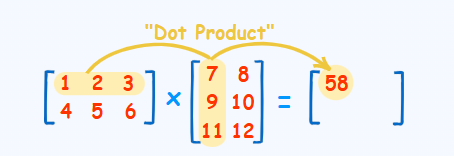

Let us understand simple neural network which actually works with the dot product.

Imagine that you have a spam dataset and in this spam dataset, you’ve pinpoint two words that are quite deterministic for spam, which are the words Offer and Free. These seem to appear more on spam emails, but of course the appearance doesn’t guarantee that the email is spam. You’ve counted the number of appearances in the emails that are spam or not spam and got this table.

Now the goal is the following. You want to build a spam filter, the best possible spam filter. That is called a classifier, which is some little machine that will try to guess if an email is spam or not based on the contents on this table. This particular classifier is going to work in the following way. You assign a score to the word Offer and a score to the word Free, then you calculate the score of a sentence by adding the scores of the words with repetition.

For example, if the score for the words Offer and Free are 3 and 2 then the sentence “Offer, Offer the package is free” we have 2 offer words in this sentence and 1 free word which means 2 * 3 + 1 * 2 = 8. Now the rule to guess if an email is spam or not is the following. If the number of points of the sentence is bigger than some amount called the threshold, then the email is classified as spam. Otherwise, it’s not. We have to determine the best possible threshold for the filter so that the above table works perfect.

This does not mean we would be classifying all of the spam mails to spam but our goal is find the best possible classifier so that it does not miss the spam emails from finding. That means one that fits the results of the table as well as possible. In other words, you want to find the best score for the word offer and for the word free and for the threshold in such a way that the results of the classifier are as close as possible to the spam column of the table. In the above table, there are 3 rows which says the spam as no. So the best possible threshold to satisfy the data given would be 1.5.

When you check the number is larger than equal to the threshold of 1.5, the column of the answers is exactly the same column that records if the email spam or not. This is the perfect spam filter for that particular dataset. That means the classifier did a pretty good job in the dataset that it was given. Now this is called natural language processing because the input was language, it was words, and it used these words to make a prediction.

Let us plot the graph to understand it better

the line represents the threshold value. If the data points that fall right side will be spam and within would be not spam. The positive region is the one for which the score in the sentence is larger than the threshold, and the negative region is that one for which the score of the sentence is smaller than the threshold. This is precisely a linear classifier, and it's actually the simplest neural network. It's a neural network with one layer. Also note that the model does make sense. The more appearances of the words offer and free, the higher the score in the sentence and the more likely the email is spam. One layer neural network can be seen as a matrix product followed by a threshold check.

the model is considered as 1 for those 2 words that determines the spam. So the dot product of the model with the row consider 2nd row here. The value is 3 which is greater than 1.5 threshold and so it is considered as spam. Now if we have more word the columns in the data set would increase and rows in model would be 1’s for all of them. This work same as before.

Here’s another way to look at this classifier. The equation to check for spam is that the score of the sentence is bigger than the threshold, which is 1.5. But that’s the same thing as saying that the score -1.5 is larger than zero. In this case, this -1.5 is called the bias. The way to include this into the matrix multiplication is to add an entire column with the number 1 in the data table and a role with the bias with a -1.5 in the model. Now instead of checking if the results is larger than the threshold of 1.5, we only have to check if the modified score is positive or negative. That gives us the exact same classifier. Sometimes you’ll see classifiers with the bias and sometimes with the threshold. For more complicated neural networks, the bias tends to be more common.

Let us consider the AND operator. The dataset is actually similar. It just has 4 rows of the previous dataset with the same labels except instead of spam and not spam we have Yes or No. What happens if we model the AND operator and get the Dot product. Now if we apply threshold check or the bias to the dot product We get exactly same output.

In this diagram, the number inside the node gets multiplied by the weight in the edge, and then they get added in the next node, then the activation function is applied. The activation is precisely, the check. It’s the one that returns a one or a yes if what it comes is non negative and a zero or no if what it comes is negative.

Using variables, this is the perceptron that appears in the lab. The input is x coming from the dataset. The weights of the model are the Ws and the bias is b. Inside the node, there appears a dot product that is added to the bias. The output of the node then gets passed the activation function and that generates the output of the perceptron.

Hope this article helped in understanding the Perceptron in the linear algebra perspective.